McKinsey’s April 2026 paper, “Building the Foundations for Agentic AI at Scale,” lands with a clarity that should resonate with every technology leader who has watched an AI pilot succeed on a whiteboard and struggle in production. The numbers are striking and instructive: nearly two-thirds of enterprises worldwide have experimented with AI agents, but fewer than 10 percent have scaled them to deliver tangible value. Eight in ten companies point to data limitations as the primary roadblock. That is not an AI problem. That is a foundation problem.

At Datafi, we have been building toward this moment for years. Not because we anticipated a McKinsey paper, but because the same principle has guided every product decision we have made: an LLM without the full context of a business is a sophisticated autocomplete engine, not a transformative one. What McKinsey is now documenting at scale, we have been resolving by design.

The bottleneck is rarely the model; it is almost always the foundation. Building a unified, semantically coherent, governed data architecture is not a precondition for AI strategy; it is the AI strategy.

The Gap Between Experimentation and Scale Is a Data Architecture Gap

The McKinsey research identifies something most enterprise AI practitioners already feel but struggle to articulate to their boards: the failure to scale agentic AI is rarely a model problem. The models are capable. The failure is infrastructural. Fragmented data, inconsistent governance, siloed pipelines, and the absence of semantic coherence across systems make it structurally impossible for an agent to operate with the confidence and consistency required for autonomous, high-stakes decision-making.

This is precisely why Datafi was built the way it was. The Business AI Operating System is not a layer bolted onto existing infrastructure. It is a vertically integrated data and AI technology stack that treats the data ecosystem, the AI layer, governance, and the user experience as a single unified system. You cannot separate these components and expect transformative outcomes. That is not a philosophical position. It is an engineering reality confirmed by what McKinsey is now observing at scale across global enterprises.

When data governance is an afterthought, agents make inconsistent decisions. When the semantic layer is absent or fragmented, agents act on conflicting interpretations of the same business reality. When the data pipeline is batch-oriented rather than continuous, agents operate on stale context. Each of these failure modes compounds the others, and together they produce the outcome enterprises keep reporting: impressive demos, disappointing production outcomes.

What “Agentify” Actually Requires

McKinsey recommends that organizations begin by identifying high-impact workflows to “agentify,” building modular data architectures, enforcing continuous data quality, and evolving governance models in parallel. It is sound advice. But the execution challenge hiding behind each of those steps is enormous, and it is one that most technology stacks were never designed to address.

Consider what agentifying a workflow actually demands. An agent operating autonomously in a demand forecasting workflow, for example, does not just need access to historical sales data. It needs real-time point-of-sale feeds, promotional calendars, external signals like weather and macroeconomic indicators, competitive pricing data, inventory positions across distribution centers, and the semantic layer that tells it how those datasets relate to one another in the context of this specific business. It needs to know that “units sold” in the ERP system and “volume shipped” in the logistics platform are not the same metric, even if they are close. It needs access to the business rules that govern how promotional lifts are calculated. And it needs to execute within a governance framework that ensures every action it takes is auditable, compliant, and reconcilable.
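To make the “units sold” versus “volume shipped” point concrete, here is a minimal sketch of how a semantic layer might record that two similar-looking fields are distinct metrics. Everything in it is a hypothetical illustration: the `MetricDefinition` class, the registry, and the wording of each definition are assumptions made for this example, not Datafi's or McKinsey's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """One business metric, tied to the system that owns it (hypothetical schema)."""
    name: str
    source_system: str
    source_field: str
    meaning: str


# Illustrative registry; the meanings below are assumed for the example.
SEMANTIC_LAYER = {
    "units_sold": MetricDefinition(
        name="units_sold",
        source_system="erp",
        source_field="units sold",
        meaning="Units recorded as sold in the ERP system",
    ),
    "volume_shipped": MetricDefinition(
        name="volume_shipped",
        source_system="logistics",
        source_field="volume shipped",
        meaning="Units recorded as shipped in the logistics platform",
    ),
}


def resolve(metric_name: str) -> MetricDefinition:
    """Return the governed definition, or fail loudly for an unknown metric."""
    try:
        return SEMANTIC_LAYER[metric_name]
    except KeyError:
        raise KeyError(f"No semantic definition registered for {metric_name!r}")


def same_metric(a: str, b: str) -> bool:
    """Two names denote the same metric only if they resolve to one definition."""
    return resolve(a) == resolve(b)
```

With a registry like this, an agent that asks `same_metric("units_sold", "volume_shipped")` gets an explicit answer rather than guessing from similar column names, and an unregistered metric raises an error instead of being silently interpreted.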

This is what we mean at Datafi when we say that LLMs need to know the full context of the business. Full context is not a retrieval problem. It is an architecture problem. Context requires a complete data ecosystem, a semantic foundation that encodes business meaning, and a governance model that travels with the data rather than being applied retroactively.

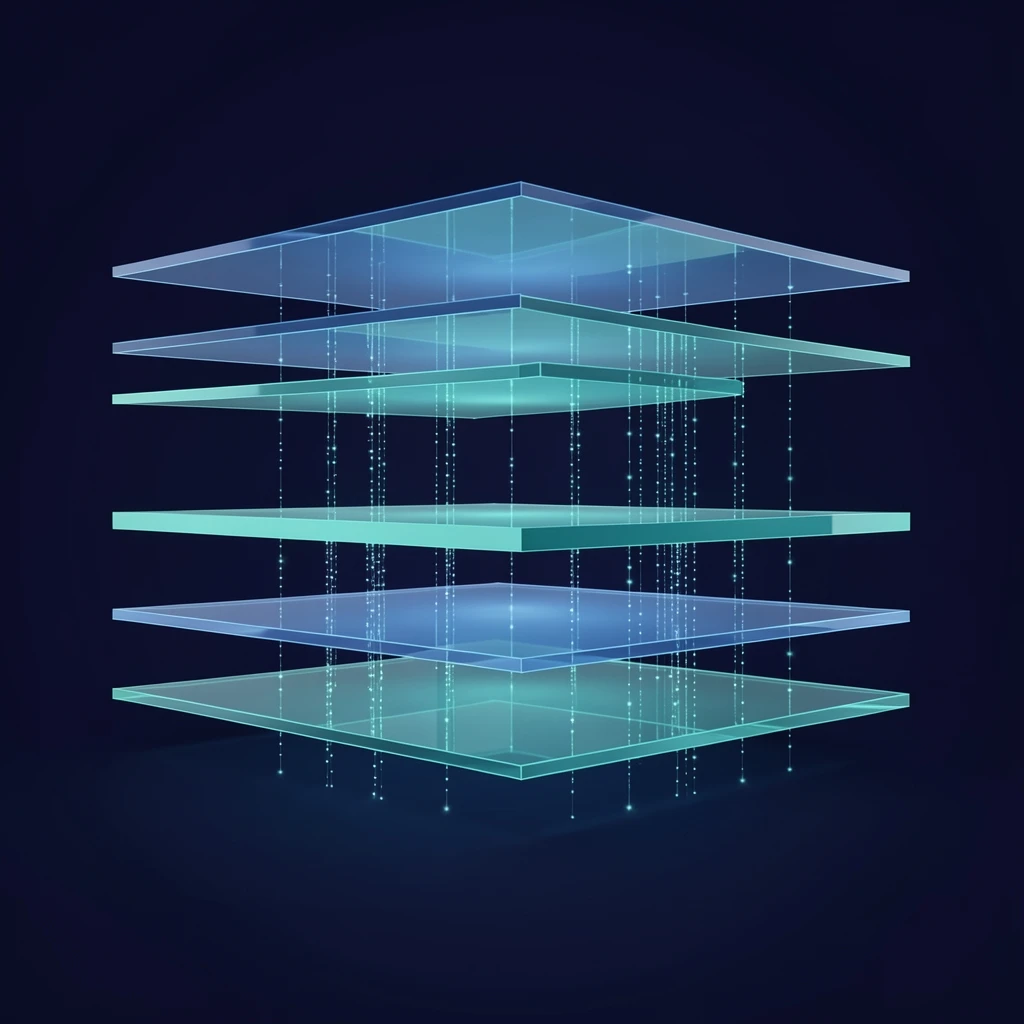

McKinsey describes this architecture in terms of layers: ingestion, the data platform, the semantic layer, data products, and consumption. The Datafi Business AI Operating System operationalizes exactly this architecture as a unified stack rather than a collection of independently procured tools. The distinction matters enormously. A collection of tools requires integration work that consumes resources, introduces latency, and creates governance gaps at every seam. A unified stack eliminates those seams by design.

The Semantic Layer Is Where Agents Become Intelligent

Of all the architectural elements McKinsey identifies, the semantic layer deserves the most attention from enterprise leaders who are serious about agentic AI. McKinsey describes it as the layer that “turns data into knowledge,” codifying business meaning into a machine-readable form. Without it, agents act on data they technically have access to but do not truly understand.

Ontologies and knowledge graphs are the implementation mechanisms here, and they are not trivial to build. They require deep subject matter expertise, careful design, and continuous maintenance as the business evolves. But they are what separate an agent that can retrieve information from an agent that can reason about it. The former answers questions. The latter solves problems.
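The difference between retrieving and reasoning can be illustrated with a toy knowledge graph. The sketch below is a simplified assumption, not a description of any production ontology: the triples, the relation names, and the `lookup` and `follow` helpers are all invented for this example. A single lookup returns only facts stored directly about an entity, while following a chain of relations answers a question no single record contains.

```python
from collections import defaultdict

# Hypothetical facts expressed as (subject, relation, object) triples.
TRIPLES = [
    ("promo_spring", "applies_to", "sku_123"),
    ("sku_123", "stocked_at", "dc_east"),
    ("sku_123", "stocked_at", "dc_west"),
]

# Index the triples by (subject, relation) for direct lookups.
graph = defaultdict(list)
for subject, relation, obj in TRIPLES:
    graph[(subject, relation)].append(obj)


def lookup(subject: str, relation: str) -> list[str]:
    """Retrieval: return only facts stored directly for this subject and relation."""
    return list(graph.get((subject, relation), []))


def follow(subject: str, path: list[str]) -> list[str]:
    """Multi-hop traversal: follow a chain of relations starting from the subject."""
    frontier = {subject}
    for relation in path:
        frontier = {obj for node in frontier for obj in lookup(node, relation)}
    return sorted(frontier)
```

Here `lookup("promo_spring", "stocked_at")` returns an empty list because no such fact is stored directly, whereas `follow("promo_spring", ["applies_to", "stocked_at"])` returns the distribution centers holding the promoted product by connecting two facts.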

The gap between answering questions and solving problems is not incremental. It is the difference between a search engine and a decision engine. Search engines retrieve. Decision engines act.

This distinction sits at the center of Datafi’s product philosophy. The contextual layer required to develop complex agents and autonomous workflows cannot be assembled from a prompt and a vector database. It requires the full semantic foundation McKinsey describes, built into the platform rather than reconstructed for each individual use case.

We have seen this play out with our own customers. Organizations that come to us having already invested in point-solution AI tools consistently describe the same experience: the tools work well for the use cases they were designed for, but they cannot be extended to adjacent workflows without rebuilding the context layer from scratch. Each new use case becomes another isolated pilot. Scaled transformation remains perpetually out of reach because the foundation was never built to support it.

Governance Is Not a Constraint on Agentic AI. It Is a Prerequisite.

McKinsey is direct on this point: as agentic systems scale, governance becomes the primary mechanism for control. Agents should not introduce new governance rules; they should operate within the same standards as any other enterprise system, applied automatically as autonomy increases.

This is not a compliance conversation. It is a scalability conversation. An agent that operates outside the governance framework is an agent that cannot be trusted at scale. And an agent that cannot be trusted at scale cannot be deployed in the high-value, critical-thinking workflows where the real business transformation occurs.

At Datafi, governed, compliance-ready AI is not a feature. It is an architectural principle. Access controls, lineage tracking, auditability, and policy enforcement are embedded into the platform at every layer, not managed as a separate governance overlay. When an agent retrieves data, accesses a model, or writes an output back to a business system, every action is logged, traceable, and governed by the policies the enterprise has defined. This is what allows organizations to move from supervised AI pilots to fully autonomous agent workflows with confidence.
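As a rough illustration of what embedded policy enforcement and auditability can look like, the sketch below routes every agent action through a single gateway that checks a policy and writes an audit record before anything runs. It is a minimal example under stated assumptions: `GovernedGateway`, `AuditRecord`, and the policy format are hypothetical names invented here, not Datafi's actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class AuditRecord:
    """One logged attempt, whether it was allowed or denied."""
    timestamp: str
    agent: str
    action: str
    resource: str
    allowed: bool


@dataclass
class GovernedGateway:
    """Hypothetical gateway: policies map an agent to its allowed 'action:resource' pairs."""
    policies: dict[str, set[str]]
    audit_log: list[AuditRecord] = field(default_factory=list)

    def execute(self, agent: str, action: str, resource: str,
                operation: Callable[[], object]) -> object:
        allowed = f"{action}:{resource}" in self.policies.get(agent, set())
        # Log before acting, so denied attempts are traceable too.
        self.audit_log.append(AuditRecord(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent=agent, action=action, resource=resource, allowed=allowed,
        ))
        if not allowed:
            raise PermissionError(f"{agent} may not {action} {resource}")
        return operation()
```

The design choice worth noting is that the agent never calls the data source directly. It passes the operation to the gateway, so the policy check and the audit entry cannot be skipped.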

McKinsey’s observation that agents should operate through “governed, reconcilable interfaces rather than bypassing enterprise quality control” is exactly right. The AI gateway concept they describe, controlling model access to unstructured data and enforcing usage policies, is native to the Datafi architecture. It is not an add-on. It is the foundation.

The Operating Model Must Evolve With the Stack

Technology architecture alone does not produce transformation. McKinsey’s fourth imperative, building an operating and governance model for agentic AI, points to something that Datafi customers navigate in every deployment. Human roles are shifting. The transition from execution to supervision and orchestration of agent-driven workflows is not automatic. It requires deliberate organizational change alongside the technology investment.

What makes this transition tractable is a user experience that meets people where they are. The Datafi Chat UI was designed for non-technical users precisely because the value of agentic AI cannot be confined to data science teams. When a supply chain manager can interact directly with an agent that has access to the full data ecosystem, understands the business context, and operates within enterprise governance, operational decisions improve at every level of the organization. Every employee becomes a direct beneficiary of the AI foundation, not just a consumer of reports generated by someone else.

This is what unified data experience means in practice. Not a dashboard for the data team. Not an analytics platform for power users. A conversational interface that gives every employee access to the intelligence embedded in the business’s data, governed and contextual, delivered in real time.

The Foundation Is the Strategy

McKinsey closes with a call to action: technology leaders must recognize that their data foundations increasingly define competitive positioning. The time has come to drive data transformations that pave the way for an agentic future.

We could not agree more. But we would add a clarification grounded in what we observe with customers every day. The foundation is not a precondition for AI strategy. The foundation is the AI strategy. Organizations that treat data architecture as infrastructure work to be completed before AI transformation begins will always be preparing, never arriving. The foundation and the transformation have to be built together, as an integrated system, because neither is meaningful without the other.

Datafi was built on this conviction. A vertically integrated data and AI technology stack with access to the full data ecosystem, embedded governance, and a Chat UI designed for every employee is not a technical preference. It is the only architecture capable of delivering what McKinsey describes and what enterprises genuinely need: agentic AI that operates at scale, solves hard business problems, learns continuously from business context, and creates the kind of transformative outcomes that justify the investment.

The companies that scale agentic AI will be the ones that invested in the foundation early. The companies that did not will find themselves rebuilding, one failed pilot at a time, until they arrive at the same conclusion: the foundation always wins.

Datafi is the Business AI Operating System for the enterprise. Purpose-built on a vertically integrated data and AI technology stack, Datafi unifies operational data, embedded governance, and agentic workflows in a single platform designed to give every employee access to the intelligence of the business.