A new protocol is quietly rewriting the rules of how AI connects to the world. For enterprise leaders, it signals something far more significant than a technical upgrade.

There is a quiet revolution underway in the infrastructure layer of enterprise AI, and most organizations are not yet paying attention to it. The Model Context Protocol, known as MCP, was introduced by Anthropic as an open standard in November 2024. On its surface, MCP looks like a developer tool, a standardized way for AI models to connect to data sources and external systems. But what it actually represents is a fundamental architectural shift in how intelligence moves through an organization, and what becomes possible when it does.

For technology leaders who have spent the last two years wondering why their AI investments have not delivered transformative outcomes, the answer has been hiding in plain sight. The problem was never the models. The problem was the plumbing.

The real bottleneck in enterprise AI has never been model capability; it has been the infrastructure connecting those models to the full context of the business. MCP addresses this at the architectural level, putting genuinely autonomous, context-complete AI within practical reach.

The API Era and Its Limits

For decades, the Application Programming Interface has been the connective tissue of the digital enterprise. APIs made integration possible. They allowed applications to talk to one another, to share data, to compose services into products. The API economy built much of what we call modern software.

But APIs were designed for a different kind of intelligence. They were built for deterministic systems, for software that follows explicit instructions and returns predictable outputs. When you call an API, you know exactly what you are asking for and exactly what you will get back. The entire design assumes a narrow, well-scoped interaction.

Large language models do not work that way. They reason. They interpret. They need context. And when you try to force that kind of intelligence through a system built for deterministic lookups, you get exactly what most enterprises have experienced: AI tools that can answer questions but cannot solve problems.

First-wave enterprise AI products (copilots, search assistants, document summarizers) were essentially wrappers around APIs. They took LLM capabilities and threaded them through existing integration patterns. The resulting tools felt impressive in demos and underwhelming in practice. They could retrieve. They could summarize. But they could not reason across the full complexity of a real business.

MCP changes this at the architectural level.

What MCP Actually Does

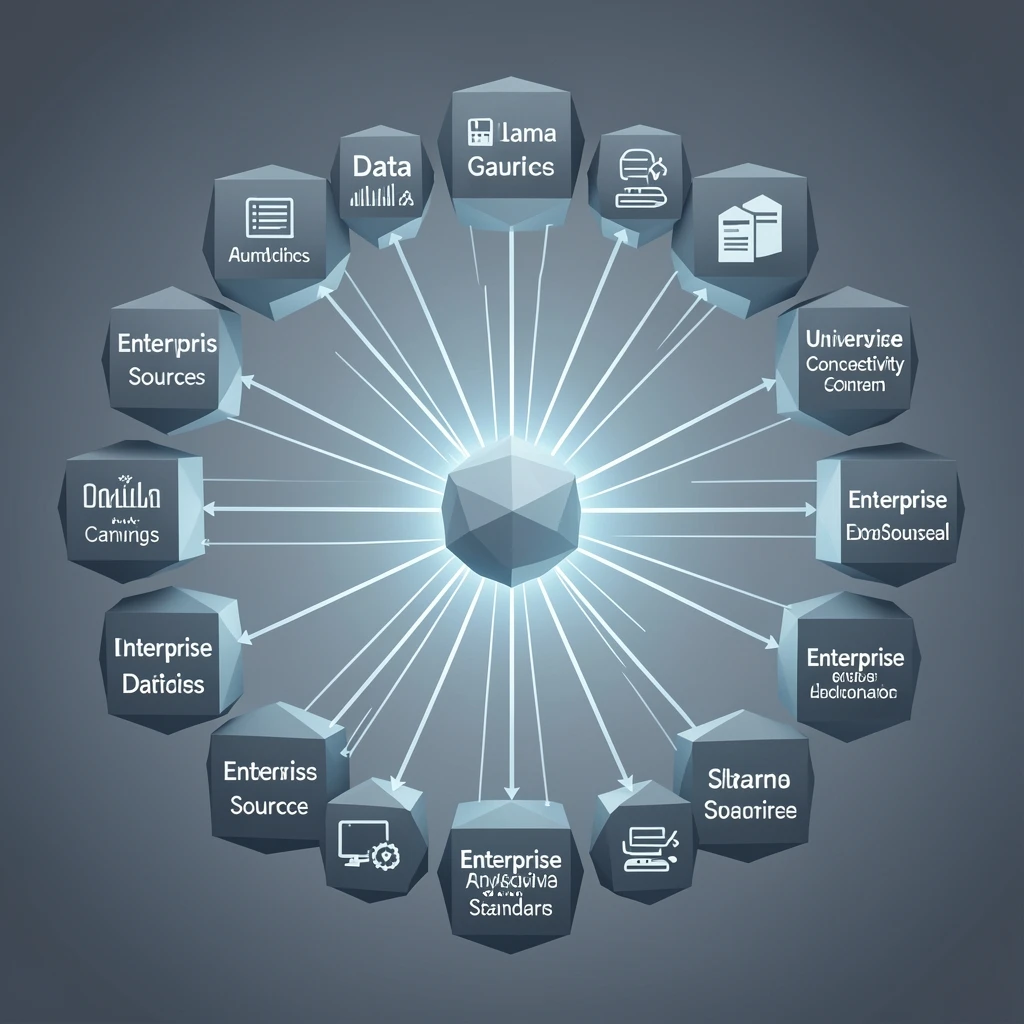

The Model Context Protocol establishes a universal, open standard for how AI models connect to data, tools, and systems. Rather than requiring custom integration work for every data source, every application, every API endpoint, MCP defines a common language: systems expose their data and capabilities through MCP servers, and any MCP-compatible AI application can discover, understand, and interact with whatever those servers offer.

Think of the difference between a traveler who must learn a new set of customs at every border and one who carries a universal passport. MCP is the universal passport for AI agents.

What this unlocks is something genuinely new: AI that can operate with broad situational awareness across an enterprise’s data and systems landscape, without the friction of bespoke integration for every connection. An AI agent operating under MCP can discover what tools are available, understand what data it can access, and take action across systems. Because every such interaction flows through one standardized interface, it can be governed and audited in one place.
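
The discover-then-act loop can be sketched in a few lines. MCP messages are JSON-RPC 2.0, and the specification defines `tools/list` for discovery and `tools/call` for invocation. Everything else here is an assumption made for illustration: the in-memory `ToyServer` class stands in for a real MCP server, and the `get_work_orders` tool, its schema, and its data are hypothetical. Treat it as a minimal sketch of the message shapes, not an implementation of the protocol; it omits transport, session initialization, and most error handling.

```python
import json


class ToyServer:
    """In-memory stand-in for an MCP server (hypothetical, illustration only)."""

    def __init__(self):
        # Each tool advertises a name, a description, and a JSON Schema for its input.
        self._tools = {
            "get_work_orders": {
                "description": "List open maintenance work orders for an asset.",
                "inputSchema": {
                    "type": "object",
                    "properties": {"asset_id": {"type": "string"}},
                    "required": ["asset_id"],
                },
                # Hypothetical handler returning made-up data.
                "handler": lambda args: [f"WO-1 for {args['asset_id']}"],
            }
        }

    def handle(self, request: dict) -> dict:
        """Dispatch one JSON-RPC 2.0 request and return the response."""
        method = request["method"]
        params = request.get("params", {})
        if method == "tools/list":
            result = {"tools": [
                {"name": name,
                 "description": tool["description"],
                 "inputSchema": tool["inputSchema"]}
                for name, tool in self._tools.items()
            ]}
        elif method == "tools/call":
            handler = self._tools[params["name"]]["handler"]
            output = handler(params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": json.dumps(output)}]}
        else:
            # -32601 is the JSON-RPC 2.0 "Method not found" error code.
            return {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32601, "message": "Method not found"}}
        return {"jsonrpc": "2.0", "id": request["id"], "result": result}


server = ToyServer()

# Step 1: the agent discovers what it can do, with no tool-specific client code.
listing = server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
tool_names = [tool["name"] for tool in listing["result"]["tools"]]

# Step 2: the agent invokes a discovered tool by name, passing schema-shaped arguments.
call = server.handle({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_work_orders", "arguments": {"asset_id": "LOCO-7"}},
})
work_orders = json.loads(call["result"]["content"][0]["text"])
print(tool_names, work_orders)
```

The point of the sketch is that the client side contains nothing specific to work orders: connecting a second system would mean registering another tool on a server, not writing another client integration.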

This is not a marginal improvement in developer productivity. This is the enabling condition for a different category of AI entirely.

The Datafi Perspective: Context Is the Product

At Datafi, we have been building toward this moment since the beginning. Our thesis has never been that AI models alone would transform enterprise operations. The model is necessary but not sufficient. What transforms an organization is AI that has access to the full context of the business.

That distinction matters more than almost any other in enterprise AI strategy today.

When an LLM is connected only to the data that was relevant for a specific, pre-scoped use case, it produces answers. Those answers may be accurate, even useful. But they are answers to the questions that humans thought to ask. They are bounded by the imagination of whoever designed the integration.

When an LLM has access to the complete data ecosystem of a business, along with the context of how that data connects, what it means, and what decisions it informs, something qualitatively different becomes possible. The AI can develop its own understanding of the business. It can surface problems that no one thought to query. It can reason across domains. It begins to solve problems rather than answer questions.

MCP is the architectural layer that makes this kind of contextual completeness achievable at enterprise scale. And it is the reason Datafi built its Business AI Operating System the way it did: vertically integrated, from the data layer through the intelligence layer, with governance embedded throughout.

Why Vertical Integration Wins

The enterprise AI market is filled with point solutions. Tools for predictive maintenance. Tools for customer experience. Tools for financial planning. Each of these tools has its own integration story, its own data model, its own governance posture. And each of them sees only a slice of the business.

This fragmentation is precisely why most organizations have struggled to move from AI experimentation to AI transformation. The intelligence is there. The data is there. But the connective tissue between them is missing.

A vertically integrated AI operating system, one that owns the data layer, the governance layer, the intelligence layer, and the user experience layer, can provide something no point solution can: a unified context layer that spans the entire business.

When Datafi’s AI agents work on a predictive maintenance problem in a rail or aviation environment, they are not just looking at sensor data from the asset being monitored. They have access to historical maintenance records, supply chain data, operational schedules, regulatory compliance requirements, and financial parameters. They can reason across all of it simultaneously. The result is not a better alert. The result is a recommendation that accounts for the full operational and business context of the decision.

The same principle applies across every high-value use case: operations optimization, passenger experience, strategic planning, workforce deployment. In each domain, the difference between useful AI and transformative AI is the same. Breadth of context. Depth of integration. Autonomy to act.

Autonomous Agents and the Contextual Layer

MCP’s emergence comes at a critical moment in the development of AI agents. The model capabilities required for genuine autonomous reasoning now exist. What has been missing is the infrastructure for those agents to develop and maintain an accurate, dynamic model of the enterprise they are operating within.

This is what we mean when we talk about the contextual layer. It is not a static knowledge base. It is not a document repository. It is a living representation of the business (its data, its processes, its policies, and its current state) that AI agents can read, reason over, and act upon.

Building this contextual layer is hard. It requires solving data access problems, data quality problems, semantic alignment problems, and governance problems simultaneously. It requires a stack that treats the data ecosystem and the intelligence layer as parts of a single integrated system, not as separate tools stitched together with APIs.

For organizations in capital-intensive industries, this is the difference between AI that monitors and AI that manages. Between AI that flags anomalies and AI that prevents failures. Between AI that produces reports and AI that drives decisions.

The economic stakes are significant. In asset-heavy industries, predictive maintenance programs powered by contextually complete AI can reduce unplanned downtime, and for the largest operators those reductions can translate to hundreds of millions of dollars in recovered value. Operations optimization programs that can reason across the full operational picture, not just individual KPIs, unlock compounding efficiencies that point solutions simply cannot reach. These are not incremental improvements. These are the transformative outcomes that board-level AI commitments were meant to deliver.

Governance Is Not the Brake. Governance Is the Accelerator.

One of the most consequential misunderstandings in enterprise AI strategy is treating governance as a constraint on capability. The implicit assumption is that the more governed and controlled an AI system is, the less it can do.

This is exactly backwards.

At Datafi, governance is embedded in the architecture from the beginning, not bolted on at the end. Every data access decision, every action an agent takes, every recommendation it surfaces, happens within a policy framework that is owned and configured by the enterprise. This is not bureaucratic overhead. It is the foundation of trust that makes genuinely autonomous AI deployment possible.
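
One way to picture governance embedded in the call path is a gate that every agent action must pass through before it reaches a tool. The sketch below is purely illustrative and does not describe Datafi’s implementation or anything defined by MCP: the `Policy` and `GovernedGateway` classes, the role names, and the tool names are all hypothetical. It shows only the core idea, that a policy decision and an audit record are produced for every call, whether or not the call is permitted.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Policy:
    """Enterprise-owned rules: which roles may call which tools (hypothetical)."""
    allowed: dict[str, set[str]]  # role -> permitted tool names

    def permits(self, role: str, tool: str) -> bool:
        return tool in self.allowed.get(role, set())


@dataclass
class GovernedGateway:
    """Wraps tool handlers so every call is policy-checked and audited."""
    policy: Policy
    tools: dict[str, Callable[[dict], Any]]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, role: str, tool: str, arguments: dict) -> Any:
        allowed = self.policy.permits(role, tool)
        # Record the decision before acting, so denied attempts are visible too.
        self.audit_log.append(
            {"role": role, "tool": tool, "arguments": arguments, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"{role} may not call {tool}")
        return self.tools[tool](arguments)


gateway = GovernedGateway(
    policy=Policy(allowed={"maintenance_agent": {"read_sensor"}}),
    tools={
        # Hypothetical tools returning made-up data.
        "read_sensor": lambda args: {"asset": args["asset_id"], "status": "ok"},
        "approve_purchase": lambda args: {"approved": True},
    },
)

# A permitted action goes through and is logged.
reading = gateway.call("maintenance_agent", "read_sensor", {"asset_id": "LOCO-7"})

# An action outside the agent's policy is blocked, and the attempt is still logged.
try:
    gateway.call("maintenance_agent", "approve_purchase", {"amount": 5000})
    denied = False
except PermissionError:
    denied = True
```

Widening what the agent may do is then a one-line policy change rather than a code change, which is the sense in which stronger governance lets an organization safely grant more autonomy.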

For business leaders, this reframes the governance conversation entirely. The question is not how to control AI so it does not do harm. The question is how to build governance structures robust enough that AI can be trusted to do more.

The Human Experience of AI Transformation

There is one more dimension that technical architecture alone cannot address, and it is the one that ultimately determines whether AI transformation happens or not: the experience of the people doing the work.

Every efficiency gain, every cost reduction, every strategic insight that AI makes possible has to pass through a human being at some point. And most enterprise AI tools today are designed for the minority of workers who are technical enough to interact with raw AI capabilities, not the majority who need AI embedded in the workflows they actually use.

Datafi’s approach to this problem is a chat-based interface designed specifically for non-technical users, connected to the full power of the underlying AI operating system. The goal is simple: every employee in the organization, from the front line to the executive suite, should be able to access the intelligence they need in the context where they need it, without needing to know anything about the systems underneath.

This is not a nice-to-have. It is the difference between an AI strategy that reaches 10 percent of an organization’s people and one that reaches all of them.

What Comes Next

MCP will not remain a niche developer concern. As it becomes the standard protocol for AI-to-system connectivity, it will reshape the architecture of enterprise software much as REST APIs did over the past two decades. Organizations that build their AI strategies on MCP-native infrastructure today will have a compounding advantage as the protocol matures and the ecosystem of connected capabilities grows.

The deeper shift is philosophical. For the last two years, enterprise AI has largely been a story about making existing work easier. Better search. Faster summarization. Smarter autocomplete. These are real improvements, but they are not transformation.

Transformation happens when AI can hold the full context of a business in its awareness, reason across that context autonomously, take meaningful action, and learn from the outcomes.

The era of APIs as we have known them is ending. The era of contextual, autonomous, enterprise-grade AI is beginning. The organizations that recognize this shift early, and invest in the integrated infrastructure required to capture it, will define what enterprise performance looks like for the next decade.

The question is not whether to build for this future. The question is how much runway you have left before your competitors do.

Datafi’s Business AI Operating System is purpose-built for organizations ready to move from AI experimentation to AI transformation. To learn how Datafi enables a unified data experience, autonomous workflows, and enterprise-grade AI governance, contact our team.